Checkpoints

- Create Documents from Vertex AI Cloud Documentation Site

- Generate embeddings from Document chunks

- Create a Vertex AI Vector Store index

- Search Vector Store, add result as context to a query (without using LangChain)

- Create a Retrieval-Augmented Generation application using LangChain

Build a Knowledge Based System with Vertex AI Vector Search, LangChain and Gemini

GSP1235

Overview

Gemini is a family of generative AI models developed by Google DeepMind that is designed for multimodal use cases. The Gemini API gives you access to the Gemini Pro Vision and Gemini Pro models. In this lab, you use Vertex AI Vector Search to index documents and create a knowledge base. The knowledge base is used to retrieve relevant search results, which are supplied as context along with a query submitted to a large language model (LLM), in this case Gemini. This technique is known as Retrieval-Augmented Generation (RAG).

This produces more specific results from the LLM without retraining or fine-tuning it on additional data.

Vertex AI Vector Search provides a high-scale, low-latency vector database. Vector databases are also commonly referred to as vector similarity-matching or approximate nearest neighbor (ANN) services.

When queried, Vertex AI Vector Search can search billions of items for semantically similar or related ones. A vector similarity-matching service has many use cases, such as implementing recommendation engines, search engines, chatbots, and text classification.

Semantic matching can be simplified into a few steps. First, you must generate embedding representations of many items (done outside of Vector Search). Next, you upload your embeddings to Google Cloud, and then link your data to Vector Search. After your embeddings are added to Vector Search, you can create an index to run queries to get recommendations or results.
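As a minimal sketch of the hand-off between the first two steps, the Python below serializes already-computed embeddings into JSON Lines records with `id` and `embedding` fields, the JSON input format that Vertex AI Vector Search reads from Cloud Storage. The IDs and three-dimensional vectors are hypothetical placeholders; in the lab, the vectors come from an embedding model and have many more dimensions, and the notebook may perform this step differently.

```python
import json
from typing import Dict, List


def to_vector_search_jsonl(embeddings: Dict[str, List[float]]) -> str:
    """Serialize {id: vector} pairs as JSON Lines, one record per line.

    Each record uses the "id" and "embedding" fields of the
    Vertex AI Vector Search JSON input format.
    """
    lines = []
    for item_id, vector in embeddings.items():
        record = {"id": str(item_id), "embedding": [float(x) for x in vector]}
        lines.append(json.dumps(record))
    return "\n".join(lines) + "\n"


# Hypothetical toy embeddings; in the lab these come from an embedding model.
toy_embeddings = {
    "doc-0": [0.1, 0.02, 0.3],
    "doc-1": [0.4, 0.0, 0.1],
}

jsonl = to_vector_search_jsonl(toy_embeddings)
print(jsonl)
```

Uploading the resulting file to a Cloud Storage folder (for example, with `gcloud storage cp`) and creating the index from that folder are separate steps not shown here.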

Once the Vector Search index has been created, it can be queried, and the results can be supplied to an LLM as context so that it generates better content without the need to fine-tune the model, which can be expensive.

Objectives

In this lab, you will:

- Generate embeddings for a dataset

- Add the embeddings to Cloud Storage

- Create an index in Vertex AI Vector Search

- Leverage similarity metrics to evaluate and retrieve the most relevant knowledge base results

- Utilize LangChain to query Vertex AI Vector Search and provide context to prompts submitted to Gemini

Setup and requirements

Before you click the Start Lab button

Read these instructions. Labs are timed and you cannot pause them. The timer, which starts when you click Start Lab, shows how long Google Cloud resources will be made available to you.

This hands-on lab lets you do the lab activities yourself in a real cloud environment, not in a simulation or demo environment. It does so by giving you new, temporary credentials that you use to sign in and access Google Cloud for the duration of the lab.

To complete this lab, you need:

- Access to a standard internet browser (Chrome browser recommended).

- Time to complete the lab. Remember, once you start, you cannot pause a lab.

How to start your lab and sign in to the Google Cloud console

- Click the Start Lab button. If you need to pay for the lab, a pop-up opens for you to select your payment method. On the left is the Lab Details panel with the following:

  - The Open Google Cloud console button

  - Time remaining

  - The temporary credentials that you must use for this lab

  - Other information, if needed, to step through this lab

- Click Open Google Cloud console (or right-click and select Open Link in Incognito Window if you are running the Chrome browser).

  The lab spins up resources, and then opens another tab that shows the Sign in page.

  Tip: Arrange the tabs in separate windows, side-by-side.

  Note: If you see the Choose an account dialog, click Use Another Account.

- If necessary, copy the Username below and paste it into the Sign in dialog.

  {{{user_0.username | "Username"}}}

  You can also find the Username in the Lab Details panel.

- Click Next.

- Copy the Password below and paste it into the Welcome dialog.

  {{{user_0.password | "Password"}}}

  You can also find the Password in the Lab Details panel.

- Click Next.

  Important: You must use the credentials the lab provides you. Do not use your Google Cloud account credentials.

  Note: Using your own Google Cloud account for this lab may incur extra charges.

- Click through the subsequent pages:

  - Accept the terms and conditions.

  - Do not add recovery options or two-factor authentication (because this is a temporary account).

  - Do not sign up for free trials.

After a few moments, the Google Cloud console opens in this tab.

Vector Search

There are a few key challenges when deploying Generative AI applications that leverage large language models (LLMs):

- Integration with existing systems

- Scalable product knowledge

- Mitigating hallucinations (inaccurate responses)

These challenges can be partly mitigated with grounding techniques: knowledge-based systems that use embeddings and vector databases.

What are embeddings?

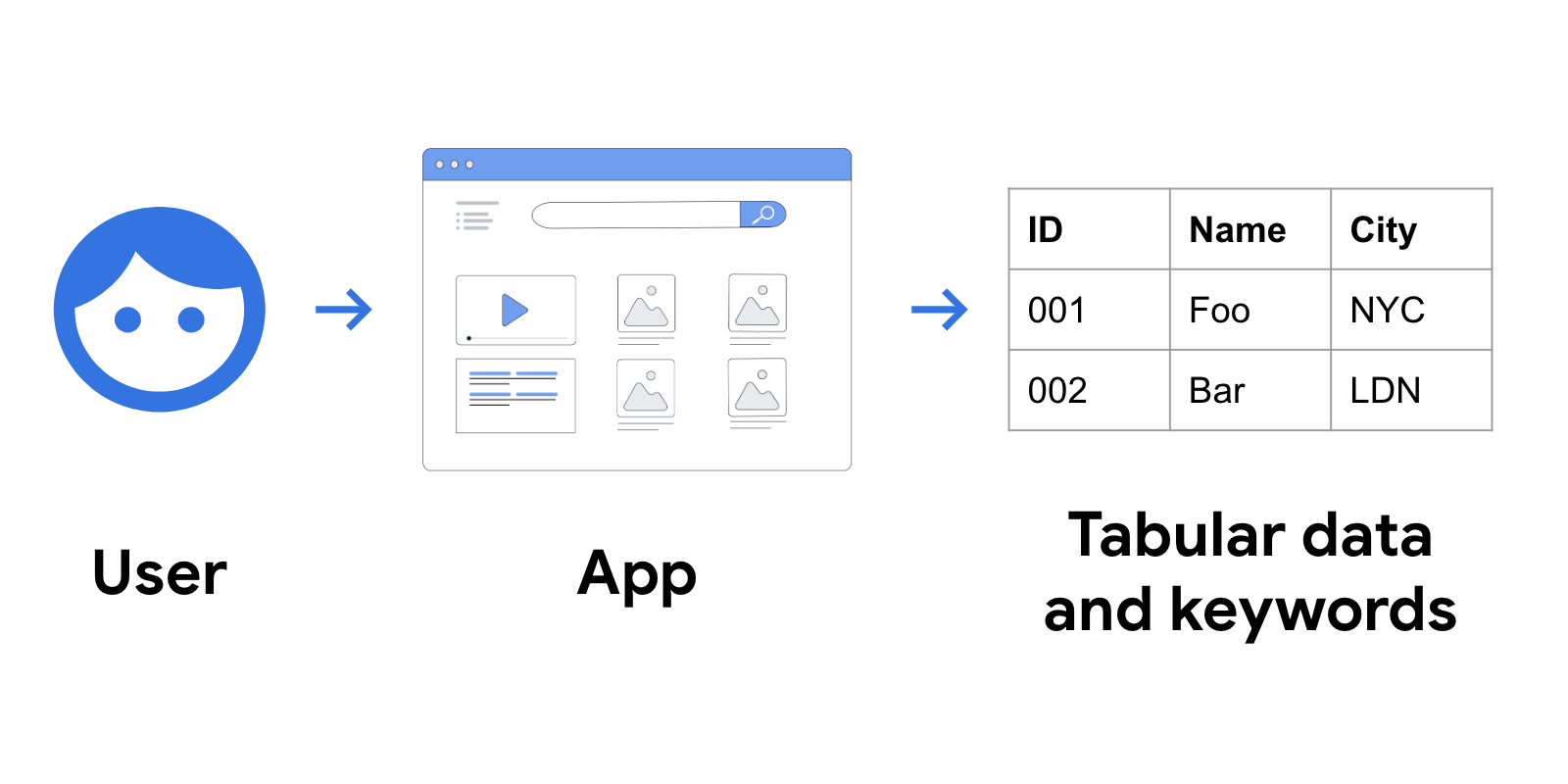

In traditional IT systems, most data is organized as structured or tabular data, using simple keywords, labels, and categories in databases and search engines.

This may look similar to the following figure.

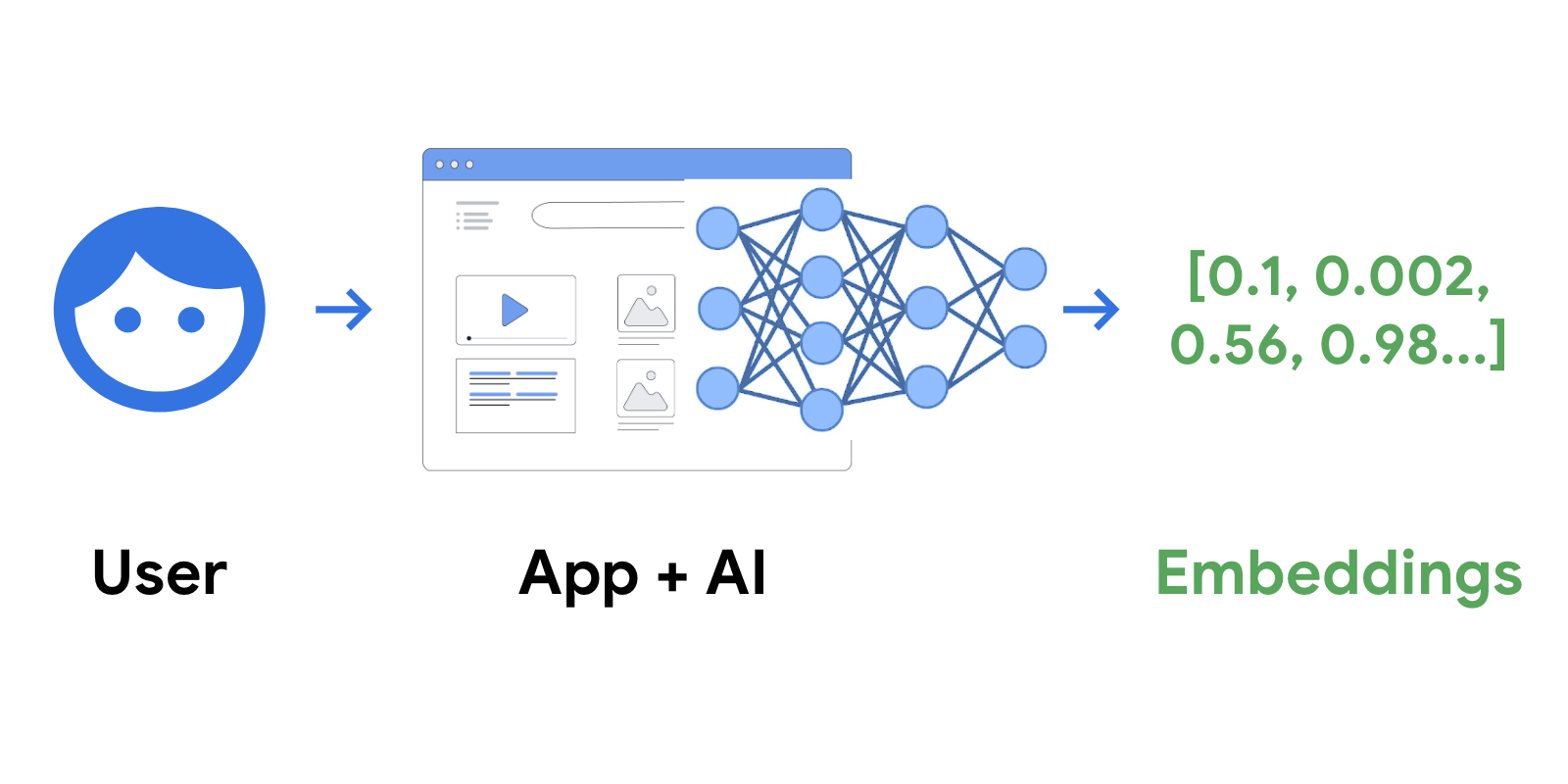

In contrast, AI-powered services arrange data into a simple data structure known as embeddings: numerical, floating-point representations of data in vector form.

Once trained on content such as text or images, an AI model creates an "embedding space", which is essentially a map of the content's meaning.

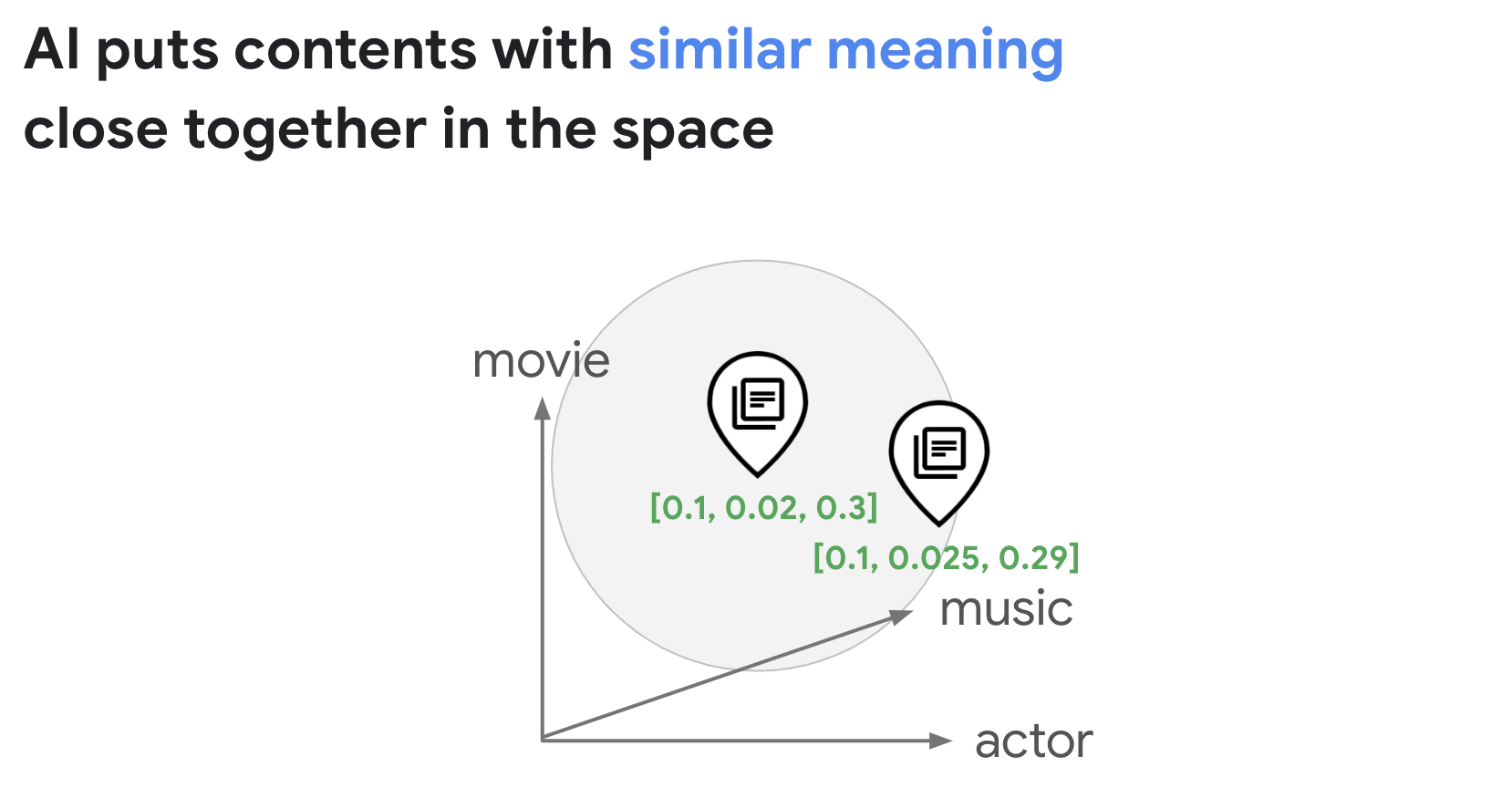

The AI model can identify the location of each piece of content on this map; that location is its embedding. For example, suppose a text paragraph discusses movies, music, and actors with a distribution of 10%, 2%, and 30%, respectively. The model could then represent the paragraph as an embedding with three values, 0.1, 0.02, and 0.3, in a three-dimensional space.
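To make the "map" idea concrete, the sketch below treats the example paragraph as a point in three-dimensional space and adds two hypothetical paragraphs. Points that are close together have similar meaning, so the paragraph with a similar topic mix lands much closer than the one that is mostly about music. The axis labels and the two extra vectors are illustrative assumptions; real embedding models use hundreds of learned dimensions that do not correspond to named topics.

```python
import math

# Hypothetical axes: (movies, music, actors). Real embedding dimensions
# are learned by the model and are not individually labeled like this.
paragraph_a = [0.10, 0.02, 0.30]  # the example paragraph
paragraph_b = [0.12, 0.01, 0.28]  # hypothetical: similar topic mix
paragraph_c = [0.01, 0.40, 0.02]  # hypothetical: mostly about music

# math.dist returns the Euclidean distance between two points (Python 3.8+).
dist_ab = math.dist(paragraph_a, paragraph_b)
dist_ac = math.dist(paragraph_a, paragraph_c)

print(f"a to b: {dist_ab:.2f}")  # a to b: 0.03
print(f"a to c: {dist_ac:.2f}")  # a to c: 0.48
```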

Embeddings can represent what a user is looking for, the meaning of content, or many other things in your business. This enables new kinds of user experiences, such as semantic search and recommendations, that are quickly becoming the standard.

Finding embedding similarities

Once embeddings are generated by an embedding model, they can be stored in a vector database for later retrieval. Vector stores make it possible to query the stored embeddings for similarity to questions posed by end users.
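A vector store's core job, storing embeddings and returning the ones closest to a query, can be illustrated with a toy in-memory class. This `InMemoryVectorStore` is hypothetical: it scores every stored vector by dot product (a brute-force scan) and is neither the Vertex AI Vector Search API nor the LangChain API. A production service uses approximate nearest neighbor (ANN) indexes instead of an exhaustive scan.

```python
import heapq
from typing import Dict, List, Tuple


class InMemoryVectorStore:
    """Toy brute-force vector store (hypothetical, for illustration only)."""

    def __init__(self) -> None:
        self._vectors: Dict[str, List[float]] = {}

    def add(self, item_id: str, embedding: List[float]) -> None:
        """Store (or overwrite) the embedding for item_id."""
        self._vectors[item_id] = embedding

    def query(self, embedding: List[float], k: int = 2) -> List[Tuple[str, float]]:
        """Return the k (id, score) pairs with the highest dot-product score."""
        scored = (
            (item_id, sum(a * b for a, b in zip(embedding, vec)))
            for item_id, vec in self._vectors.items()
        )
        # Exhaustive scan: every stored vector is scored for every query.
        return heapq.nlargest(k, scored, key=lambda pair: pair[1])


store = InMemoryVectorStore()
store.add("pricing", [0.9, 0.1, 0.0])
store.add("quotas", [0.7, 0.3, 0.1])
store.add("tutorial", [0.0, 0.2, 0.9])

print(store.query([1.0, 0.0, 0.0], k=2))  # [('pricing', 0.9), ('quotas', 0.7)]
```

Exhaustive scoring is exact, but its cost grows with the number of stored vectors, which is why services designed for billions of items trade a small amount of accuracy for speed with ANN.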

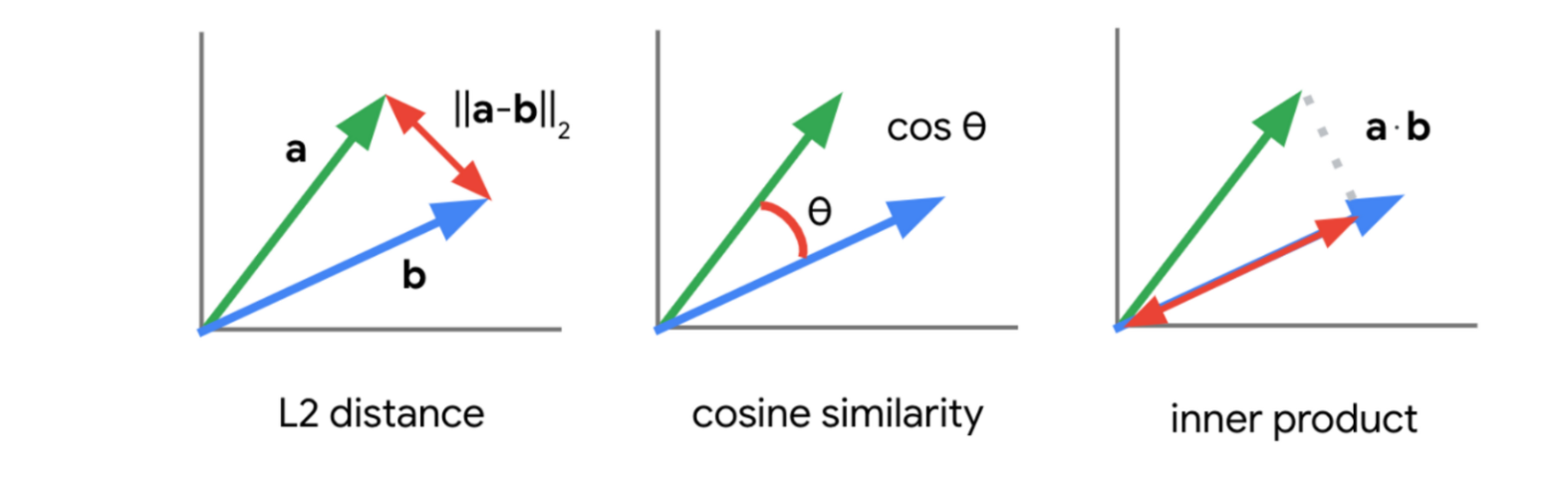

There are a few methodologies that can be used to determine vector similarity. In this lab, you use the inner product (also known as dot product distance) to find similarities between vector embeddings.
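The sketch below compares the inner product with two other common measures, cosine similarity and squared Euclidean (L2) distance, on hypothetical vectors. On raw vectors, the dot product favors larger magnitudes, so it can rank results differently from cosine similarity. On unit-length vectors, the dot product equals cosine similarity and squared L2 distance equals 2 - 2 * dot product, so all three produce the same ranking. Whether a particular embedding model returns normalized vectors is an assumption to check in that model's documentation.

```python
import math


def dot(u, v):
    """Inner (dot) product: higher means more similar."""
    return sum(a * b for a, b in zip(u, v))


def cosine(u, v):
    """Dot product divided by both vector lengths, so magnitude is ignored."""
    return dot(u, v) / (math.sqrt(dot(u, u)) * math.sqrt(dot(v, v)))


def squared_l2(u, v):
    """Squared Euclidean distance: lower means more similar."""
    return sum((a - b) ** 2 for a, b in zip(u, v))


def normalize(u):
    """Scale u to unit length."""
    norm = math.sqrt(dot(u, u))
    return [a / norm for a in u]


query = [0.1, 0.02, 0.3]
doc_short = [0.2, 0.0, 0.4]   # hypothetical: similar direction, small magnitude
doc_long = [2.0, 1.5, 2.5]    # hypothetical: different direction, large magnitude

# Raw vectors: the dot product prefers doc_long, cosine prefers doc_short.
print(dot(query, doc_short), dot(query, doc_long))
print(cosine(query, doc_short), cosine(query, doc_long))

# Unit-length vectors: the three measures agree.
q, s = normalize(query), normalize(doc_short)
print(math.isclose(dot(q, s), cosine(query, doc_short)))      # True
print(math.isclose(squared_l2(q, s), 2 - 2 * dot(q, s)))      # True
```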

Using this methodology, a query submitted to an LLM is first converted into an embedding, and that vector is used to find similar documents indexed by the vector store. The returned results are supplied to the LLM as context to reduce hallucinations and produce more factual responses. This technique is known as Retrieval-Augmented Generation (RAG).
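The retrieve-then-generate flow described above can be sketched end to end in plain Python. Everything here is a hypothetical stand-in: `embed` is a toy word-count function over a six-word vocabulary (the lab uses a Vertex AI embedding model), retrieval is a brute-force dot-product ranking (the lab queries a Vector Search index), the prompt template is illustrative, and the final call to Gemini is omitted.

```python
from typing import Dict, List

# Hypothetical toy vocabulary; a real embedding model needs no fixed word list.
VOCAB = ["index", "endpoint", "deploy", "embedding", "pricing", "quota"]


def embed(text: str) -> List[float]:
    """Toy embedding: count how often each vocabulary word appears."""
    words = text.lower().replace("?", " ").replace(".", " ").split()
    return [float(words.count(term)) for term in VOCAB]


def dot(u: List[float], v: List[float]) -> float:
    return sum(a * b for a, b in zip(u, v))


def retrieve(question: str, chunks: Dict[str, str], k: int = 2) -> List[str]:
    """Return the k chunk texts with the highest dot-product score."""
    q = embed(question)
    ranked = sorted(chunks.values(), key=lambda text: dot(q, embed(text)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: List[str]) -> str:
    """Prepend the retrieved chunks as context (illustrative template)."""
    context_block = "\n".join(f"- {c}" for c in context)
    return (
        "Answer the question using only the context below.\n"
        f"Context:\n{context_block}\n"
        f"Question: {question}"
    )


chunks = {
    "c1": "Deploy the index to an index endpoint before querying it.",
    "c2": "Pricing depends on the machine type you choose.",
    "c3": "Each embedding must have the same number of dimensions as the index.",
}
question = "How do I deploy an index to an endpoint?"
prompt = build_prompt(question, retrieve(question, chunks))
print(prompt)
# The prompt would then be sent to Gemini (call not shown).
```

Only the top-ranked chunks reach the prompt, which steers the model toward the retrieved documentation; the choice of `k` trades additional context against prompt length.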

What is Vertex AI Vector Search?

Google Cloud developers can take full advantage of Google's vector search technology with Vertex AI Vector Search (previously called Matching Engine).

With Vertex AI Vector Search, developers can add embeddings to an index and issue low-latency search queries against it. Vertex AI Vector Search is capable of searching billions of embeddings with sub-millisecond retrieval times.

Task 1. Vertex AI Workbench

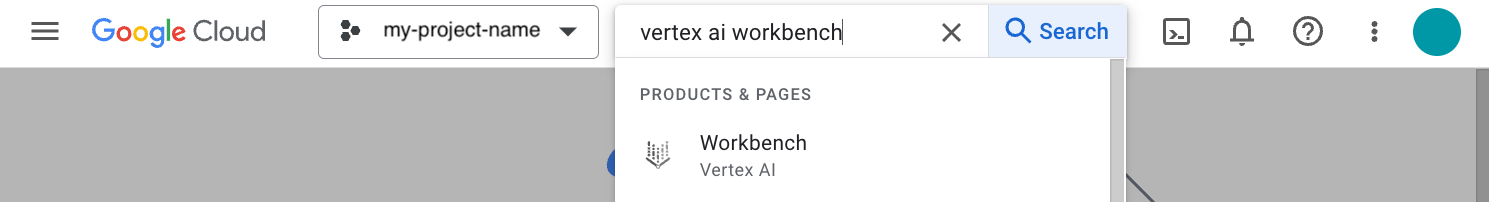

In your Google Cloud project, navigate to Vertex AI Workbench: in the top search bar of the Google Cloud console, enter Vertex AI Workbench.

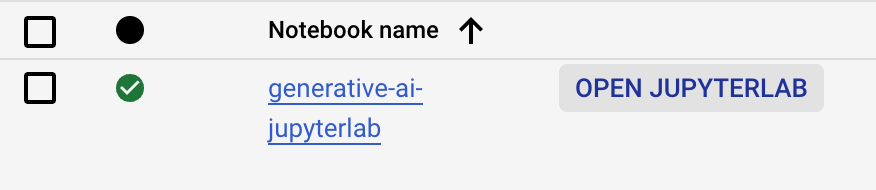

- Go to User-managed notebooks.

- Click Open JupyterLab for generative-ai-jupyterlab.

- JupyterLab opens in a new tab.

Task 2. Open the Jupyter Notebook

You will use a pre-installed Jupyter notebook to run the steps of this lab.

- Click the gemini_langchain_vector_search_rag.ipynb file in the left file explorer.

- Run through the Initial Setup section of the notebook.

- Set the PROJECT_ID variable to.

- Set the

- Follow the steps in the notebook and run each cell one at a time.

After completing each section of the notebook, click Check my progress to verify the objective.

Congratulations!

You loaded the Vertex AI documentation using a URLLoader provided by LangChain, created embeddings from the loaded documents, and then created and deployed a Vertex AI Vector Search index to query those embeddings from a generative AI application built with LangChain.

The application supplied the results of Vector Search queries as context to end-user queries, grounding the responses and reducing hallucinations with a technique called Retrieval-Augmented Generation (RAG).

Next steps / learn more

- Check out the Generative AI on Vertex AI documentation.

- Learn more about Generative AI on the Google Cloud Tech YouTube channel.

- Google Cloud Generative AI official repo

- Example Gemini notebooks


Manual Last Updated May 24, 2024

Lab Last Tested May 24, 2024

Copyright 2024 Google LLC All rights reserved. Google and the Google logo are trademarks of Google LLC. All other company and product names may be trademarks of the respective companies with which they are associated.